Disclaimer: I really am no expert in Python or generative AI, so this is just a script that came out of some experimenting I did. Take it or leave it! I tried adding links to anything that might help you dive in deeper.

So you want to run Stable Diffusion on your local machine, but you don’t have the latest and greatest graphics card? No problem. In this post you will see how to create a small Python script that generates images with “Stable Diffusion 2” and runs on an NVIDIA GeForce GTX 1060 graphics card with 6 GB of video memory. By choosing smaller resolutions you might even be able to run it with less video memory.

You will need to have Python installed and CUDA working on your system (on Linux this means the NVIDIA kernel modules are loaded). Then install the needed dependencies using pip. Typically you would want to use something like conda to keep your Python packages separate from the ones your operating system ships. For ease of use and to keep dependencies minimal, I didn’t bother with that here.

```shell
pip install --upgrade "diffusers[torch]" transformers xformers
```
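Before loading a multi-gigabyte model, it can help to check that PyTorch actually sees your graphics card. The following is only a minimal sketch and not part of the original script: the helper name `describe_gpu` is made up for this post, and it relies on nothing more than `torch.cuda.is_available()` and `torch.cuda.get_device_properties()`.

```python
import importlib.util


def describe_gpu() -> str:
    """Return a one-line summary of the GPU PyTorch can see (hypothetical helper)."""
    # Avoid a crash if the pip install step has not been run yet.
    if importlib.util.find_spec("torch") is None:
        return "PyTorch is not installed"

    import torch

    if not torch.cuda.is_available():
        return "PyTorch is installed, but no CUDA device is available"

    props = torch.cuda.get_device_properties(0)  # first GPU in the system
    total_gib = props.total_memory / 1024**3
    return f"{props.name}: {total_gib:.1f} GiB video memory"


print(describe_gpu())
```

If this reports that no CUDA device is available, the script further down will most likely fail at `pipe.to("cuda")`, so it is worth sorting out the driver setup first.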

The Python script looks as follows.

import torch

from diffusers import StableDiffusionPipeline

from datetime import datetime

pipe = StableDiffusionPipeline.from_pretrained("stabilityai/stable-diffusion-2-1",torch_dtype=torch.float16)

pipe = pipe.to(“cuda”)

pipe.enable_xformers_memory_efficient_attention()

pipe.vae.enable_tiling()

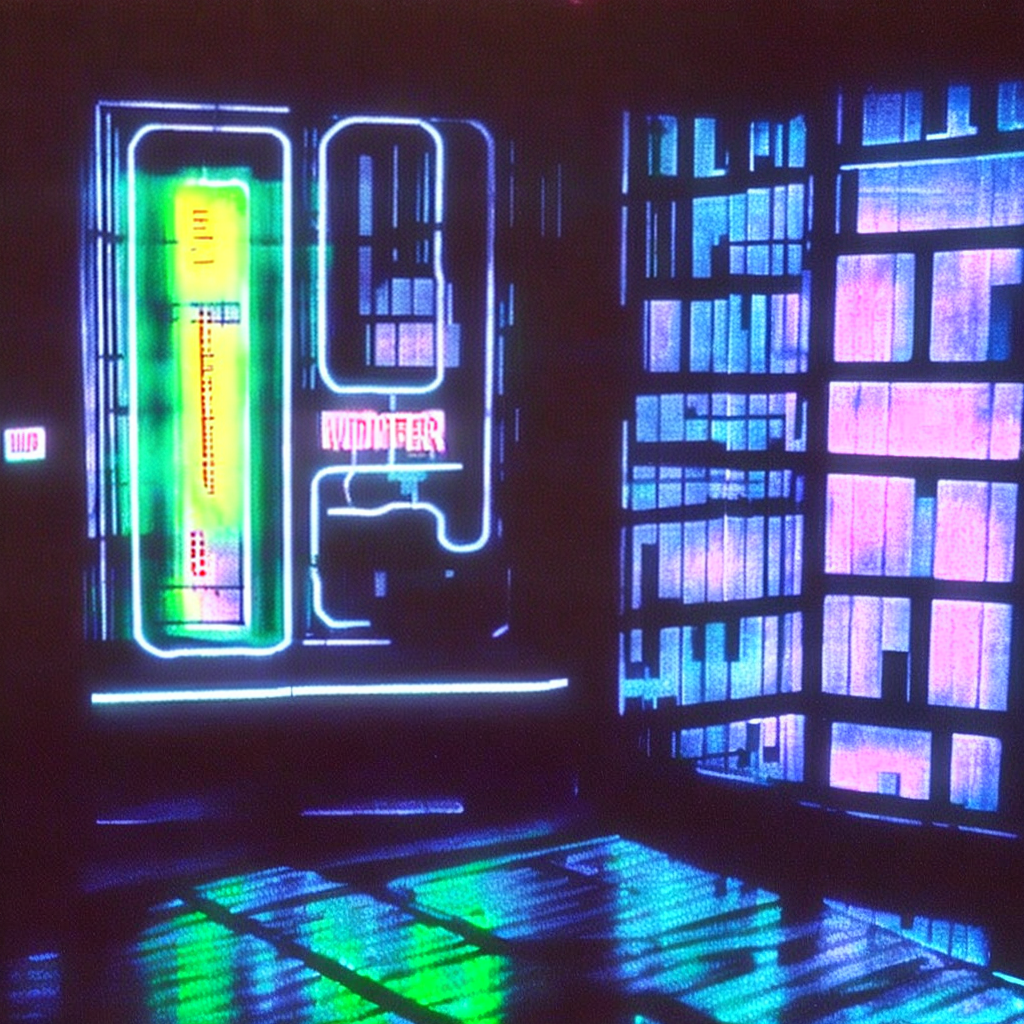

prompt = “Windows 95 running in a bladerunner room at night, outside the windows there is neon advertisement, room is musky”

image = pipe(prompt, width=1024, height=1024, num_inference_steps=100, guidance_scale=20).images[0]

image.save(“output/” + str(datetime.now().timestamp()) + “_” + prompt + “_output.png”)
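The script puts the whole prompt into the file name, which works for the prompt above but can get awkward with very long prompts or with characters like `/` or `?`. Here is a small sketch of how you could build a more robust file name. The helper `output_path` and its parameters `out_dir` and `max_len` are my own invention for illustration, not something diffusers provides.

```python
import re
from datetime import datetime
from pathlib import Path


def output_path(prompt: str, out_dir: str = "output", max_len: int = 80) -> Path:
    """Build a filesystem-friendly PNG path from a prompt (hypothetical helper)."""
    # Replace every run of characters that are not letters, digits,
    # or hyphens with a single underscore, then cap the length.
    slug = re.sub(r"[^A-Za-z0-9-]+", "_", prompt).strip("_")[:max_len]
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    folder = Path(out_dir)
    folder.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    return folder / f"{stamp}_{slug}.png"


print(output_path("Windows 95 running in a bladerunner room at night"))
```

You could then save with `image.save(output_path(prompt))`, since Pillow accepts a `Path` object as well as a string.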

What does it do?

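In the StableDiffusionPipeline.from_pretrained call we specify that the datatype should be 16 bits (torch_dtype=torch.float16). This part is the essential hint on how to run it on GPUs with little video memory. On top of that, enable_xformers_memory_efficient_attention() and pipe.vae.enable_tiling() trim memory use further, in the attention layers and when decoding the final image.

For the fun of it, here are some cool images I generated using the script :)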
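To put a rough number on the 16-bit point from above, here is a back-of-the-envelope calculation of how much memory the weights alone need. Treat it as a sketch: the function `weights_size_gib` is made up for this post, and the one billion parameters are a round number I picked for illustration, not the measured size of Stable Diffusion 2.1.

```python
def weights_size_gib(num_params: int, bits_per_param: int) -> float:
    """Memory needed just to hold the weights, in GiB."""
    return num_params * (bits_per_param / 8) / 1024**3


# ASSUMPTION: one billion parameters is a round illustrative number,
# not the measured size of Stable Diffusion 2.1.
params = 1_000_000_000

for bits in (32, 16):
    print(f"{bits}-bit: {weights_size_gib(params, bits):.2f} GiB")
# prints:
# 32-bit: 3.73 GiB
# 16-bit: 1.86 GiB
```

The weights are only part of the story, since the intermediate results during image generation need memory too, but on a 6 GB card halving the weights already frees up a big chunk.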

Cheers, Basti.